Finding Deep Neural Network Vulnerabilities

ECE Assistant Professor Xue “Shelley” Lin designed a T-shirt that prevents a deep neural network from detecting the person wearing it. Exposing weaknesses like this one will help researchers improve the security of these systems.

This T-shirt could make you invisible (to deep neural networks)

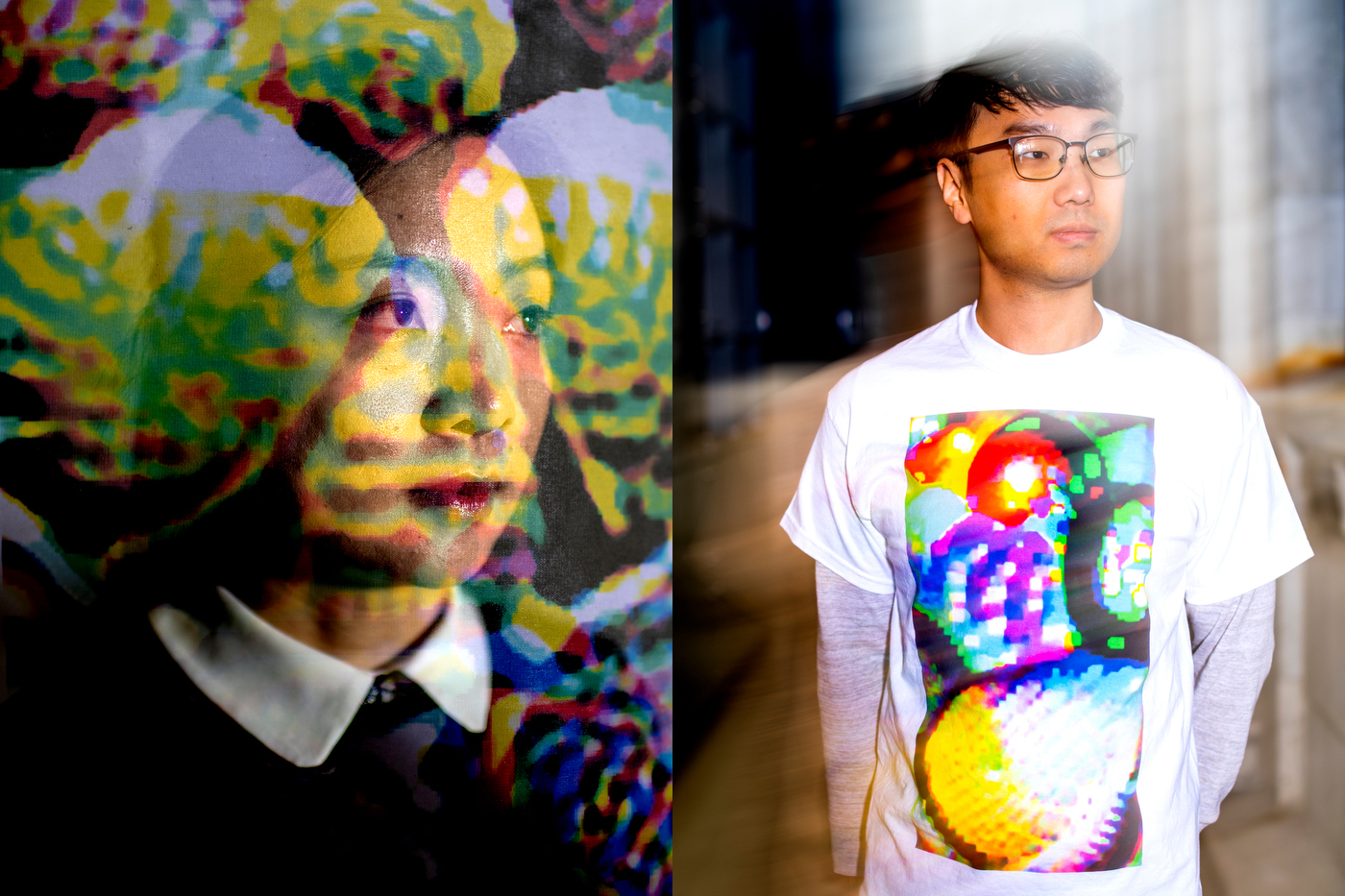

Main photo: The pattern on this shirt prevents specific deep neural networks from being able to recognize that the wearer is a person. Photo by Matthew Modoono/Northeastern University

Two men walk side-by-side toward a camera. One wears an all-black outfit. The other is in khakis and a white T-shirt with a brightly colored, abstract pattern centered on the front. But the artificial intelligence analyzing the video only reports one person.

The person in the T-shirt is invisible.

The pattern on the white shirt, which looks like something you might’ve seen at a Grateful Dead concert, was designed by researchers at Northeastern, IBM, and the Massachusetts Institute of Technology to deceive the specific deep neural network analyzing the footage.

“Deep neural networks are very powerful, but also can be vulnerable to adversarial attacks,” says Shelley Lin, an assistant professor of electrical and computer engineering at Northeastern. “When you wear the T-shirt, it’s highly possible that the deep neural network won’t identify you in an image.”

Shelley Lin, an assistant professor of electrical and computer engineering at Northeastern, and Kaidi Xu, a doctoral student in her lab, helped devise the pattern. Photos by Matthew Modoono/Northeastern University

Deep neural networks are a type of artificial intelligence that is often used to identify and classify images, sounds, or other inputs, by recognizing patterns. Researchers train these algorithms with millions of examples until they are able to identify an individual’s voice or add decades to the face in a selfie.

But knowing the details of how a specific network has been trained makes it possible to work backwards to create images that could cause the neural network to identify, say, a panda as a gibbon.

Lin and her colleagues are working with deep neural networks trained as object detectors, which means they are able to pick out, and correctly label, shapes in a video as “person” or “horse” or “umbrella.” The researchers wanted to design an image that would make a person undetectable.

In a digital space, this is relatively straightforward: Researchers can find and alter the value of specific pixels within an image to confuse the neural network. Making these attacks work in the real world is harder, but researchers have already shown that a few well-placed stickers on a stop sign could make an artificial-intelligence system see “Speed Limit 45”—a potentially deadly mistake.
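To illustrate the digital case, here is a minimal sketch in Python. It assumes a toy linear "person" scorer rather than a real object detector, and the names `score` and `perturb`, the weights `w`, the bias `b`, and the step size `epsilon` are all hypothetical, not taken from the researchers' work. It shows only the general idea: nudge every pixel a small amount in the direction that lowers the model's score.

```python
import numpy as np

def score(x, w, b):
    """Toy 'person' confidence: a sigmoid over a linear function of the pixels."""
    return 1.0 / (1.0 + np.exp(-(np.dot(w, x) + b)))

def perturb(x, w, epsilon):
    """Shift each pixel by at most epsilon in the direction that lowers the score.

    For this toy model the gradient of the score with respect to x is a
    positive multiple of w, so stepping against sign(w) reduces the score.
    """
    x_adv = x - epsilon * np.sign(w)
    return np.clip(x_adv, 0.0, 1.0)  # keep pixel values in the valid range

# Hypothetical 8x8 grayscale "image", flattened to 64 values in [0, 1].
rng = np.random.default_rng(0)
w = rng.normal(size=64)              # made-up model weights
b = -0.5 * w.sum()                   # bias chosen so a mid-gray image is neutral
x = 0.5 + 0.1 * np.sign(w)           # an input the toy model scores as "person"

x_adv = perturb(x, w, epsilon=0.2)

print("score before:", score(x, w, b))
print("score after: ", score(x_adv, w, b))
```

No pixel changes by more than `epsilon`, yet the toy model's decision flips. Real detectors are far more complex, but they can be attacked with the same kind of small, targeted changes.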

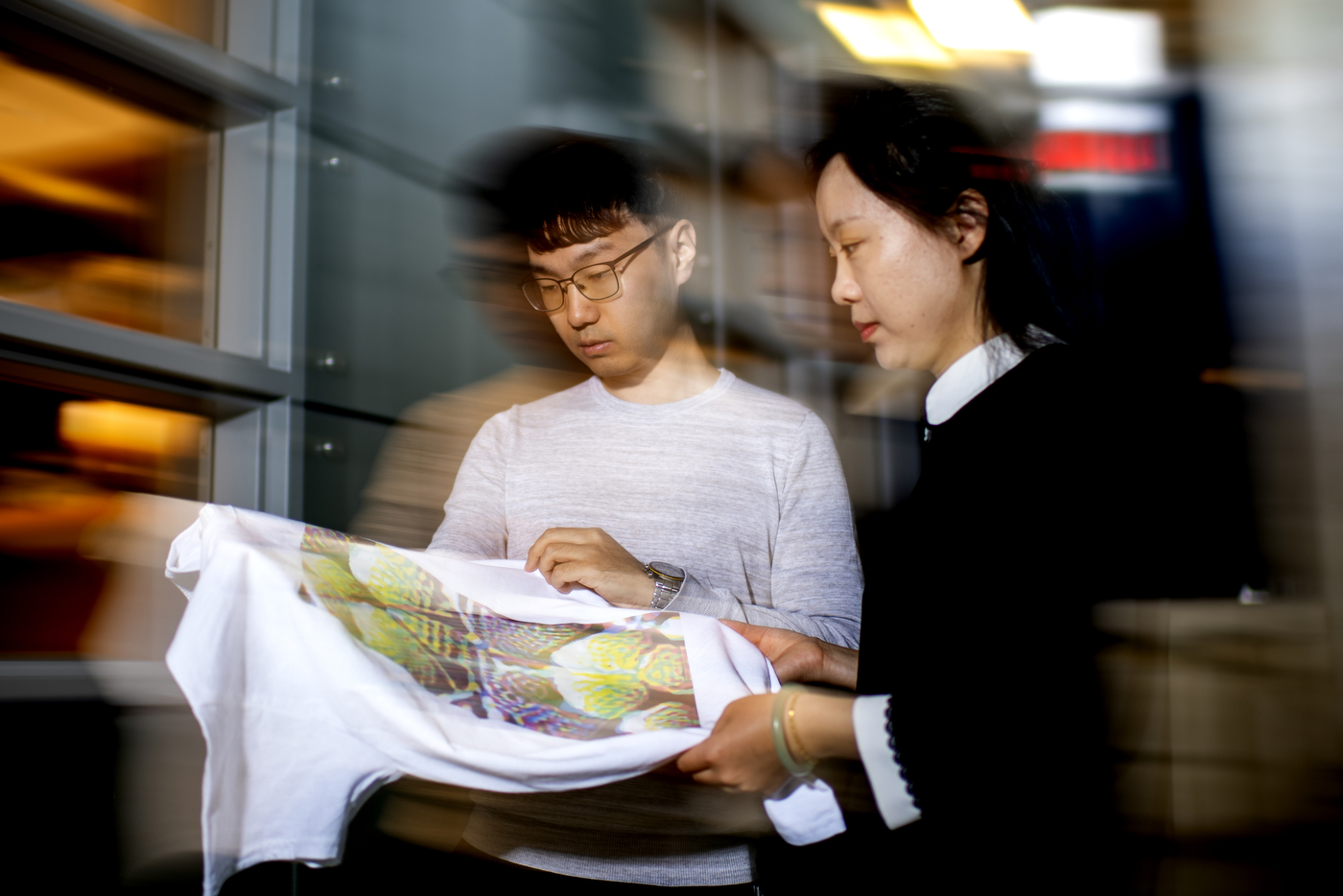

Before printing their pattern, the researchers had to account for the way a T-shirt moves and wrinkles in the real world. Photos by Matthew Modoono/Northeastern University

But a stop sign is a flat, stationary surface. Designing a pattern that can twist and warp with a T-shirt and still deceive a neural network is significantly more challenging.

“We had to model the transformation of the shirt while people walk, and use that in our calculations,” Lin says. “Our mathematical problems had to model how the shape changes.”

Lin and her colleagues recorded a person walking in a T-shirt printed with a checkerboard pattern. By tracking the corners of each square, they were able to map out how the shirt moved and wrinkled. Then they factored that information into their adversarial design before printing it.
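The corner-tracking step can be sketched roughly as follows. The article does not say which mathematical model the researchers fitted to the tracked corners, so this example swaps in a simple least-squares affine map as a stand-in. Real cloth wrinkles in ways an affine map cannot capture and would need a more flexible warp. The function names and the corner coordinates below are hypothetical.

```python
import numpy as np

def fit_affine(src, dst):
    """Least-squares 2D affine map that takes src corners to dst corners.

    src, dst: arrays of shape (N, 2). Returns a (3, 2) matrix M such that
    [x, y, 1] @ M approximates the matching point in dst.
    """
    ones = np.ones((src.shape[0], 1))
    A = np.hstack([src, ones])                   # homogeneous coordinates, (N, 3)
    M, *_ = np.linalg.lstsq(A, dst, rcond=None)  # solve A @ M ~= dst
    return M

def apply_affine(M, pts):
    """Apply a fitted affine map to an array of (N, 2) points."""
    ones = np.ones((pts.shape[0], 1))
    return np.hstack([pts, ones]) @ M

# Hypothetical data: a 4x4 grid of checkerboard corners on the flat shirt...
gx, gy = np.meshgrid(np.arange(4.0), np.arange(4.0))
flat = np.column_stack([gx.ravel(), gy.ravel()])

# ...and where those corners appear in one video frame (synthetic here).
true_M = np.array([[0.9, 0.1],
                   [-0.2, 1.1],
                   [5.0, 3.0]])
tracked = apply_affine(true_M, flat)

M = fit_affine(flat, tracked)
warped = apply_affine(M, flat)  # where the model says each corner should land
```

A map fitted this way for each frame could then be applied to a candidate pattern during the design process, so the pattern is evaluated as it would appear on a moving shirt rather than on a flat image.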

The result was a shirt that kept the wearer from being spotted more than 60 percent of the time. Not perfect, but impressive nonetheless.

Of course, the researchers aren’t trying to create a T-shirt that would allow the ultimate superspy to stroll past the surveillance cameras of tomorrow. They want to find these holes and fix them.

“The ultimate goal of our research is to design secure deep-learning systems,” Lin says. “But the first step is to benchmark their vulnerabilities.”

News @ Northeastern